[A piece of work with Hans Stokking from TNO]

Cross-device immersive media has been one of my main research topics for many years. To get the “immersion”, we inevitably need a mechanism to orchestrate media playback across devices. This sounds easy but is very often hard to do in practice, especially across different kinds of device (iPad, Smart TV, Pi…). One challenge is delay. When you send a command (such as “START PLAYING”) to another device, your application takes some time to encode and pack the information into manageable chunks, which then sit in a queue waiting to be serialised as packets for network distribution. Will your packets then travel at the speed of light? Not quite, but still at about 177,000 km/s (59% of the speed of light) in Ethernet cables. But the Internet is not an empty highway: your packets will “bump into” others at network nodes such as routers or switches, which simply means they’ll probably sit in a queue again and wait for their turn. When the packets finally arrive at the receiver, they must then go through the network interface card, the driver, the TCP/IP stack, and any application that controls the media playback before your command can be executed… The whole process, after all the waiting, may only take a few hundred milliseconds. Not bad. OK, let’s make things easier for ourselves and put all the devices very close to each other on the same network; then the overall delay is probably just under 100 milliseconds (1/10 of a second). Sounds good, right? Surely it’s OK for one speaker to lag the other by 100 milliseconds???

What did our recent research say about the human perception of latency in an inter-destination audio-visual test?

20-40 milliseconds perceivable.

60-100 milliseconds annoying.

Oops…

If you are thinking “No worries, let’s actively measure all those delays (serialisation delay, queuing delay, propagation delay, processing delay, reply delay, etc.) that our messages encounter and compensate for them when we execute the command”, then you are with the group of “mad” researchers in the MediaSync community, who’ve spent many years investigating the sources of Internet delays and the feasible ways to measure them.
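To make the “measure and compensate” idea a bit more concrete, here is a minimal sketch of one common approach: an NTP-style exchange of four timestamps, used to estimate the clock offset between two devices and the round-trip time between them. It is only an illustration, not the method from our chapter. The function names are hypothetical, and the estimate assumes the forward and return paths take roughly the same time, which real networks do not guarantee.

```python
import time


def estimate_offset_and_rtt(t0, t1, t2, t3):
    """NTP-style estimate from one request/reply exchange.

    t0: sender's clock when the probe is sent
    t1: receiver's clock when the probe arrives
    t2: receiver's clock when the reply is sent
    t3: sender's clock when the reply arrives

    Returns (offset, rtt) in the same unit as the inputs, where offset is
    "receiver clock minus sender clock". Assumption: symmetric path delay.
    """
    offset = ((t1 - t0) + (t2 - t3)) / 2.0
    rtt = (t3 - t0) - (t2 - t1)  # time on the wire, excluding reply processing
    return offset, rtt


def to_receiver_time(sender_time, offset):
    """Translate a deadline on the sender's clock to the receiver's clock."""
    return sender_time + offset


def wait_until(local_deadline, clock=time.monotonic, sleep=time.sleep):
    """Sleep until local_deadline on the given clock; return the time waited."""
    remaining = local_deadline - clock()
    if remaining > 0:
        sleep(remaining)
    return max(remaining, 0.0)
```

Instead of sending “START PLAYING” and hoping it arrives quickly, the sender can then send “start playing at time T on my clock”, with T far enough in the future to cover the measured delay. Each receiver converts T with `to_receiver_time` and calls `wait_until` before starting playback, so the network delay no longer decides when the sound comes out.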

To give an overview of different packet probing methods to estimate delay and available bandwidth (and to show how such measurements can really make or break a cool media application), Hans and I are now finalising a manuscript for a Springer book chapter.

It looks like we are going over the 25-page limit though…

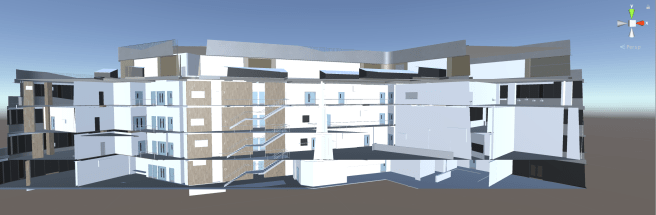

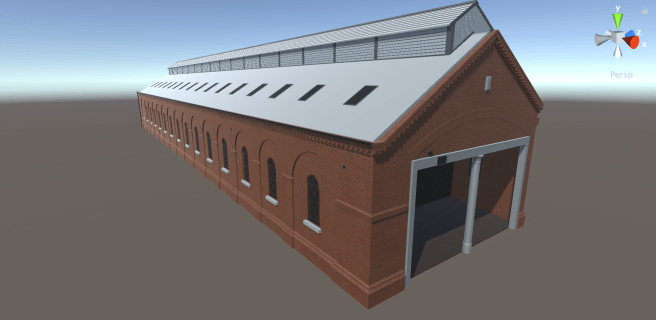
