I had the great pleasure of joining a Westminster Higher Education Forum event today as a speaker. My session was chaired by the Labour MP Mr Alex Sobel, and its main theme was the opportunities and challenges in adopting new technologies in colleges and universities in the UK. The venue was packed with 100+ delegates from over 60 institutions and businesses across England. I spoke about our research findings on the use of VR in education and shared my views on how technologies can empower human educators in Education 4.0. The following are my notes. The official transcripts from all speakers will be available on the Westminster Higher Education Forum website.

In its early days, virtual reality was mainly used for industrial simulation and military training in controlled environments using specialised equipment. As the technology became more accessible, we started to see more use of VR in gaming and education. In education, VR is mostly used as a stimulus to enhance student engagement and the learning experience. It helps visual learners, breaks down barriers, and can visualise things that are hard to imagine. So we are mostly capitalising on its indirect benefits.

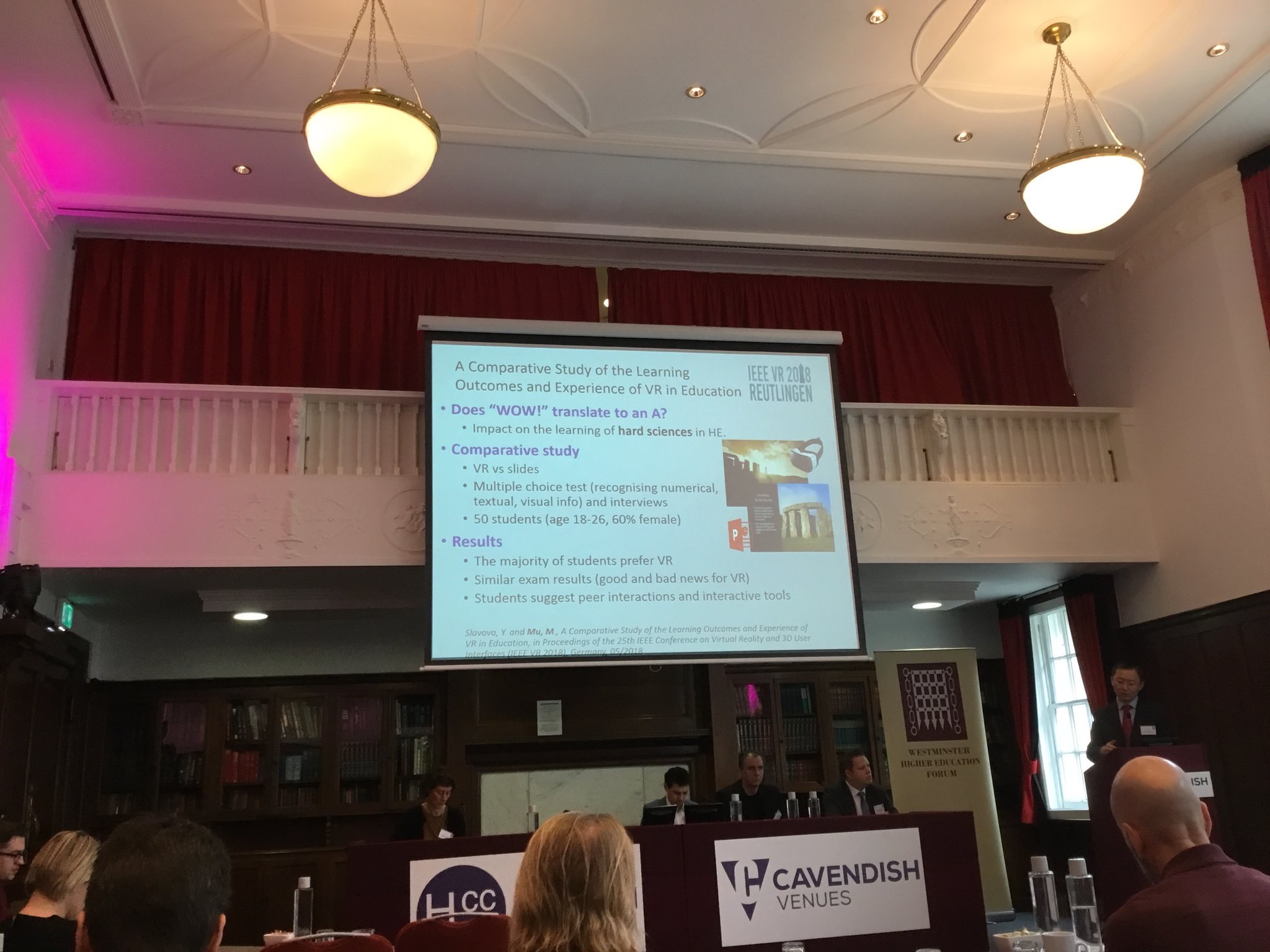

My research group is interested in whether such stimuli can truly improve learning outcomes, and how, so that we know how to improve the technology or use it more appropriately. We conducted an experiment with two groups of university students to compare how well they learn hard sciences using VR materials and PowerPoint slides. Their performance was measured using a short exam paired with interviews. The results suggest that the majority of students prefer learning in VR, but there is no significant difference between the two groups in average scores. A recent study from Cornell University shows a similar finding. However, when we looked at the breakdown of scores on individual questions, we discovered that students who studied via VR did very well on questions related to visual information recognition, but they struggled to recall numerical and textual details such as the year and location of an event. We think this is due to how information is presented and the extra cognitive load in VR. So VR made some things better but others worse: it's a double-edged sword.

This does not mean that VR is a waste of money. We need more work to learn how to use the tool better. This means two things. One, we need VR to be more accessible: not only in cost but, more importantly, through easy-to-use design tools and open libraries that help the average lecturer embrace the technology. Two, we need appropriate metrics and measurement tools to assess the actual impact of new technologies, and to share that experience with the community.

Furthermore, we need to keep an eye on what role VR should take in education. One thing we can learn from the past is the story of PowerPoint in education. (PowerPoint was invented in the 1980s, acquired by Microsoft, and went on to become one of the most commonly used tools in business and education. PowerPoint has drastically changed how teaching is done in classrooms.) PowerPoint was meant to augment a human presenter, but it has become the main delivery vehicle in the classroom, while lecturers have become the operators or narrators of slides. Critics have concluded that PowerPoint has not empowered academia. (Some institutes have banned teachers from using PowerPoint. According to The New York Times, similar decisions were also made in the US Armed Forces because they regard it as a poor tool for decision-making.) Many institutes, including the University of Northampton, are moving away from pure slideshows to active and blended learning, and use data science and smart campus technologies to support hands-on, experimental and interactive learning. So we can certainly learn from the past when we approach VR and other new technologies.

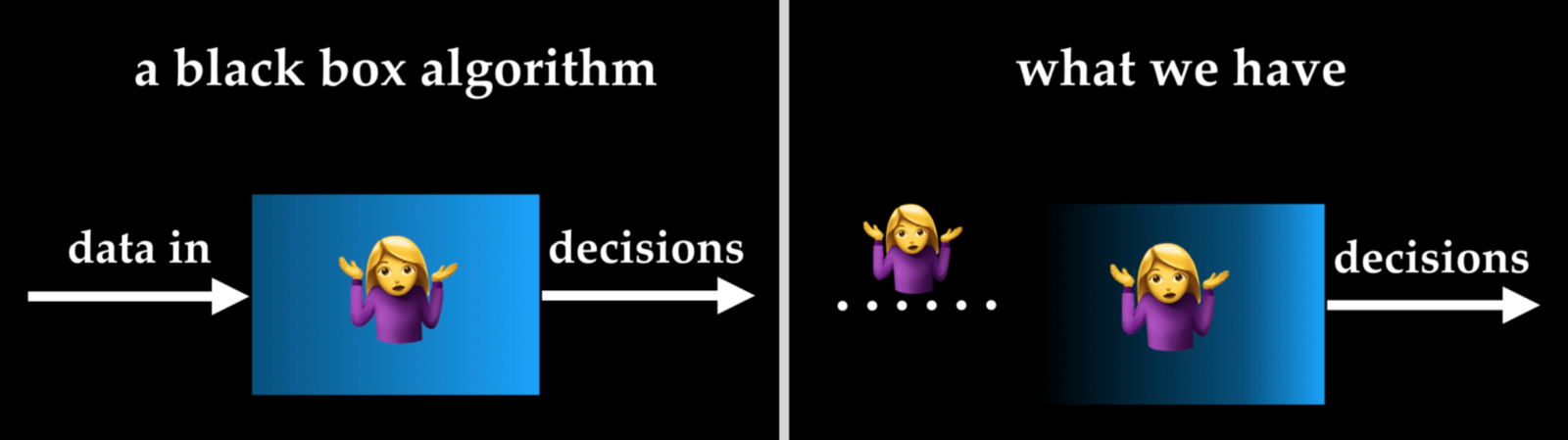

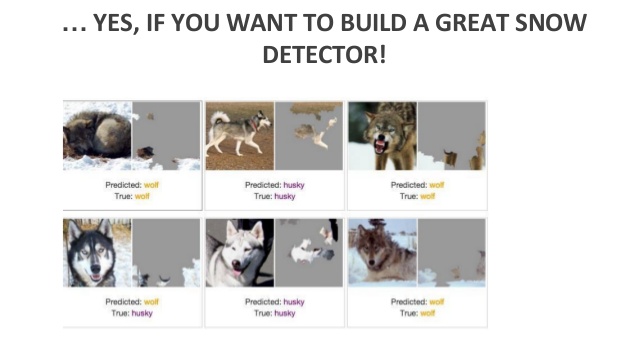

Another important aspect is the human factor. At the end of the day, only human educators are accountable for the teaching process. We listen to what learners say, observe their emotions, sympathise with their personal issues, and reason with them about every decision we make while trying to be as fair as possible. My team is working on many computer science research topics related to human factors, such as interpretable machine learning and understanding human intent. However, new technologies such as VR and AI should be designed and integrated to empower human educators rather than replace us.